Automated UI testing with Cypress

It’s been more than a year and a half since we started using Cypress for our automated functional testing, and it has been well worth the investment. Cypress is now an essential part of how we automate regression testing, which helps us ship new releases faster and with higher quality.

Getting started with Cypress is fun and easy. But as we added more scripts with varying requirements, we ran into several setbacks and hurdles, such as flaky tests, which slowed down our efforts to automate test cases. More and more of our focus and time went into maintaining the test scripts themselves, so we decided to reevaluate our strategy. We came up with guidelines and best practices for using Cypress, which I’m going to share with you in this post.

Here at Mattermost, we have many types and stages of testing, such as unit, integration, load, performance, and end-to-end (E2E) for UI functional, REST API, and system. For the purpose of this article, I will focus only on E2E, specifically user interface (UI) and functional testing, which typically runs the entire application (both the server and the web application) while test scripts written in Cypress interact with it as a typical user would.

E2E test setup

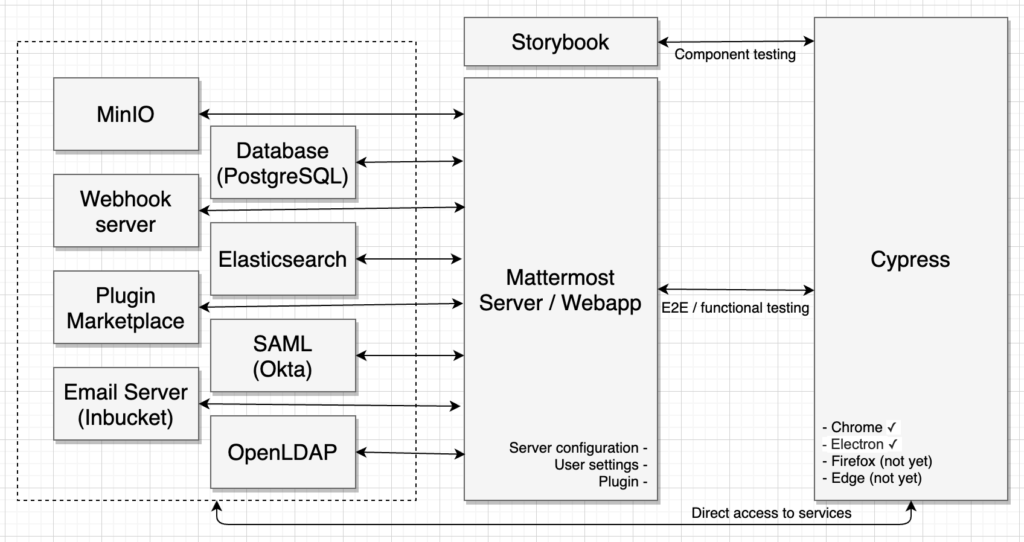

Before we dive into the guidelines and best practices, let’s take a look at our E2E test setup first, which is detailed in the following illustration:

The Mattermost server and web application, which we’ll refer to as “server,” is spun up with all the required services, such as PostgreSQL, Elasticsearch, SAML/Okta, OpenLDAP, MinIO, the Webhook server, the Plugin Marketplace, and the Email server. Once the server is ready, Cypress interacts with it as a user would, initiating actions and verifying results. In some cases, it accesses the services directly to set up or verify information. There is also a separate static website generated from Storybook that is used to check the functionality of individual components from the web application.

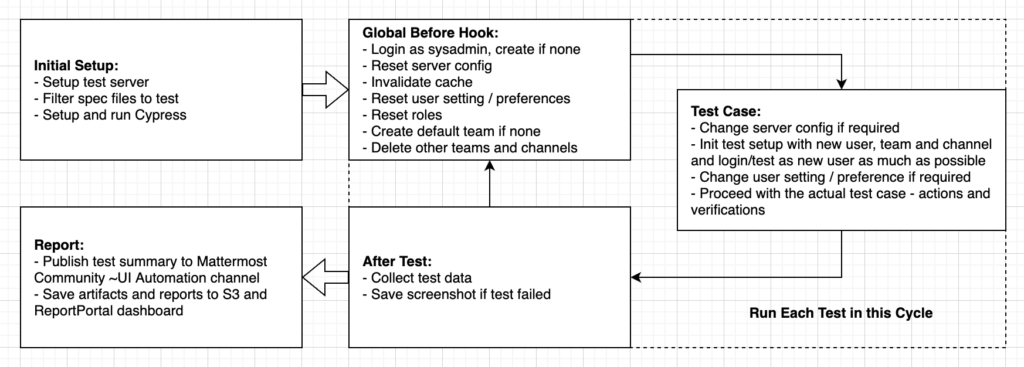

Typical test execution lifecycle

The illustration above shows the test execution lifecycle of our E2E tests. It starts with the initial setup, where the test environment is prepared, for example by spinning up the test server. Once that’s done, it runs each test file until all are completed. Finally, it consolidates the individual reports and artifacts, saves them to an AWS S3 bucket and the ReportPortal dashboard, and publishes a test summary to our community channel.

General tips

Now that we have an idea of how we set up and execute E2E tests with Cypress, let me share some of the guidelines and best practices we learned and formulated. They aim to give developers and contributors a happy path for including test scripts as they develop features and fix bugs, and to let the quality assurance (QA) team easily catch regressions during nightly build and release testing.

1. Reset before test

This applies to both server and user settings. In the past, we prepared test requirements in the test file only. Back then, changes in settings were easy to track and had minimal effect on the UI.

However, that approach didn’t hold up. As we developed more and more features, we were bitten many times by settings left over from earlier tests. We now make sure, in the global before hook, that the server and the user (specifically the sysadmin) are in a predetermined state before testing.
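As a rough sketch of what such a global hook can look like: a before hook placed in the Cypress support file applies to every test file. The command names cy.apiAdminLogin, cy.apiUpdateConfig, and cy.apiSaveUserPreferences, as well as the baseline values, are placeholders for illustration only.

```javascript
// cypress/support/index.js (sketch under assumptions, not a definitive setup)

// Placeholder baseline values; a real suite would keep its own defaults.
const BASELINE_CONFIG = {TeamSettings: {EnableOpenServer: true}};
const BASELINE_ADMIN_PREFERENCES = [
    {category: 'display_settings', name: 'message_display', value: 'clean'},
];

// Put the server and the sysadmin into a predetermined state.
// All three cy.api* commands are hypothetical custom commands.
function resetToKnownState() {
    cy.apiAdminLogin();
    cy.apiUpdateConfig(BASELINE_CONFIG);
    cy.apiSaveUserPreferences(BASELINE_ADMIN_PREFERENCES);
}

// Hooks defined in the support file apply to every test file.
// The guard only keeps this sketch loadable outside a test runner.
if (typeof before === 'function') {
    before(resetToKnownState);
}
```

Keeping the reset in one place means an individual test file only has to describe what differs from the baseline.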

2. Isolate test

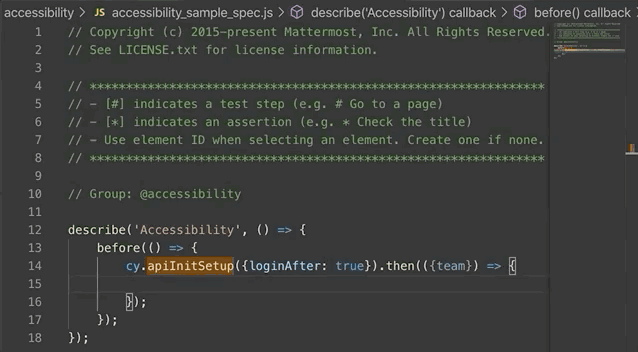

When tests use known test data such as users, teams, and channels, test cases change that data, which is fine in itself. However, it made preparing the state for the next test painful, so we decided to stop sharing test data between test files by using a convenient custom command: cy.apiInitSetup. This command automatically creates test data:

    cy.apiInitSetup({loginAfter: true}).then(({team}) => {
        cy.visit(`/${team.name}/channels/town-square`);
    });

3. Organize custom commands

Cypress custom commands are useful for automating workflows that are repeated in tests over and over again. You may use them to override or extend the behavior of built-in commands, or to create new ones that take advantage of the Cypress internals.

However, custom commands can easily get out of control: they become hard to discover, and it becomes hard to avoid adding similar or duplicate ones. As of this writing, we have almost 200 commands, and the following guidelines have helped us keep them organized:

- Each command does one specific thing, as its name suggests.

- Organize by folder and file, especially for the bulk of API commands, which we structured based on how the API reference is set up.

- Follow a standard naming convention, adding a prefix that denotes how the command does its work.

  - Example: cy.apiLogin(user) means user login is done directly via the REST API, and cy.uiChangeMessageDisplaySetting(display) means changing the message display setting is done via the UI workflow.

- Make commands discoverable through autocompletion and IntelliSense by adding type definitions for each custom command, with comments for in-code documentation.
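The prefix convention can be sketched as follows. This is an illustration under assumptions, not the implementation of our commands: the login endpoint and request body are assumed, uiOpenChannel is a hypothetical command, and the sidebar selector follows the pattern used in the test script example later in this post. JSDoc comments stand in here for the in-code documentation that separate type definitions would carry.

```javascript
/**
 * "api" prefix: the work is done directly through the REST API, no UI involved.
 * @param {{username: string, password: string}} user
 */
function apiLogin(user) {
    return cy.request({
        method: 'POST',
        url: '/api/v4/users/login', // assumed login endpoint
        headers: {'X-Requested-With': 'XMLHttpRequest'},
        body: {login_id: user.username, password: user.password},
    });
}

/**
 * "ui" prefix: the work is done through the UI, as a user would do it.
 * Hypothetical command, for illustration only.
 * @param {string} channelName
 */
function uiOpenChannel(channelName) {
    return cy.get(`#sidebarItem_${channelName}`).click();
}

// Register the commands only when running inside Cypress.
if (typeof Cypress !== 'undefined') {
    Cypress.Commands.add('apiLogin', apiLogin);
    Cypress.Commands.add('uiOpenChannel', uiOpenChannel);
}
```

With names like these, a reader of a test script can tell at a glance whether a step goes through the UI or bypasses it.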

4. Be explicit in the test block

Classic examples are user login and URL redirection, which were previously done in places like custom commands or helper functions located in a separate file.

Instead, such actions should be done explicitly in the test block itself. That way, you can easily follow the workflow and avoid surprises, such as a page suddenly redirecting to an unexpected URL or a client session being removed or replaced by another user’s.

5. Avoid dependency of test block from another test block

In cases where a test file has several test blocks, each test block, or it(), should be independent of the others. Running a single test with .only, or excluding one with .skip, should work normally and should not rely on state generated by other tests. This makes verifying individual tests faster and more deterministic. Cypress has a section that explains this in detail.

6. Avoid nested blocks

This is specifically about the describe block, which is used for grouping tests. Nesting works well when all your tests are passing. Even with a failing test, it can still work when none of the nested blocks has a hook such as before or beforeEach, since the remaining tests keep running from start to finish.

On the other hand, the nightmare begins when some or all of the nested blocks have hooks and an assertion fails inside one of them. Subsequent tests are then either skipped automatically or may fail because of the unexpected state.

    describe('Parent', () => {
        before(() => {/** Do test preparation */});

        describe('Child', () => {
            beforeEach(() => {
                // Do test preparation for child
                // Note: if the test failed here,
                // succeeding tests are skipped or
                // may fail too due to unexpected state.
            });

            it('test 1', () => {...});
            it('test 2', () => {...});
        });

        describe('Another child', () => {
            before(() => {/** Do test preparation for another child */});

            it('test 1', () => {...});
            it('test 2', () => {...});

            describe('Grandchild', () => {
                beforeEach(() => {/** Do test preparation for grandchild */});

                it('test a', () => {...});
                it('test b', () => {...});
            });
        });
    });

One option to avoid this and still keep tests grouped is to break the file into several test files and organize them in a folder. It’s a trade-off: you give up some readability and organization in exchange for maintainability and a better chance that every test actually runs.

7. Avoid unnecessary waiting

Cypress has a section that explains this in detail and lists workarounds for when you find yourself needing to wait. Explicit waits make tests flaky or slower than they need to be.

On top of that, we use cypress-wait-until under the hood, which makes it easier to wait for a certain condition. You’ll find custom commands like cy.uiWaitUntilMessagePostedIncludes, which is sometimes used to wait for a system message to get posted before making an assertion.
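A minimal sketch of a waiting helper built on cypress-wait-until, assuming the plugin has been imported once in the support file (import 'cypress-wait-until'). The cy.waitUntil(checkFunction, options) call and its timeout, interval, and errorMsg options come from the plugin; the helper name waitUntilTextPosted and the #postListContent selector are hypothetical, and this is not the implementation of cy.uiWaitUntilMessagePostedIncludes.

```javascript
// Hypothetical helper: wait until some posted message contains `text`.
function waitUntilTextPosted(text, timeout = 10000) {
    return cy.waitUntil(
        // The check is retried until it yields a truthy value.
        // The container element is assumed to exist already.
        () => cy.get('#postListContent').then(($el) => $el.text().includes(text)),
        {
            timeout, // give up after this many milliseconds
            interval: 500, // pause between retries, in milliseconds
            errorMsg: `Message containing "${text}" was not posted in time`,
        }
    );
}

// Register as a custom command only when running inside Cypress.
if (typeof Cypress !== 'undefined') {
    Cypress.Commands.add('waitUntilTextPosted', waitUntilTextPosted);
}
```

Unlike a fixed cy.wait(5000), this returns as soon as the condition holds and only waits the full timeout when something is actually wrong.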

8. Add comments for each action and verification

During pull request (PR) review, test scripts are normally reviewed by technical (e.g., developer) and non-technical (e.g., QA analyst) staff. Although the code is readable and comprehensible, the convention of adding comments helps everyone align on what is going on in the test script. In the example below, a comment starting with # describes an action and one starting with * describes a verification. Non-technical staff can easily verify whether the written test script corresponds to the actual test case without having to find their way around the code itself.

    // # Post a message in a test channel by another user
    cy.postMessageAs({sender: anotherUser, message: 'from another user', channelId: testChannel.id});

    // # Go to off-topic channel via LHS and post a message
    cy.get('#sidebarItem_off-topic').click();
    const message = 'Hello';
    cy.postMessage(message);
    cy.uiWaitUntilMessagePostedIncludes(message);

    // # Go to test channel where the first message is posted
    cy.get(`#sidebarItem_${testChannel.name}`).click();

    // * Check that the new message separator is visible
    cy.findByTestId('NotificationSeparator').should('be.visible').within(() => {
        cy.findByText('New Messages').should('be.visible');
    });

9. Selectively run tests based on metadata

There are cases where we don’t need to run the entire test suite. The test environment, browser, or release version might not be supported by a certain test case, for example. Or simply, the written test is not stable enough for production.

Unfortunately, Cypress doesn’t have this capability, so we implemented a Node.js script that lets us run tests selectively. Start by adding metadata, as we call it, to a test file:

    // Stage: @prod
    // Group: @accessibility

Then, running node run_tests.js --stage='@prod' --group='@accessibility' will run the production tests in the accessibility group.
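To illustrate the idea, here is a minimal sketch of how metadata-based selection could work. It is not the actual run_tests.js: the function names, the file names, and the rule that a file must carry every requested tag are assumptions for illustration.

```javascript
// Extract metadata such as {stage: ['@prod'], group: ['@accessibility']}
// from the source text of a test file.
function parseMetadata(source) {
    const metadata = {};
    for (const line of source.split('\n')) {
        const match = line.trim().match(/^\/\/\s*(Stage|Group):\s*(.+)$/);
        if (match) {
            metadata[match[1].toLowerCase()] = match[2].trim().split(/\s+/);
        }
    }
    return metadata;
}

// A file matches when it carries every requested tag.
// `requested` looks like {stage: '@prod', group: '@accessibility'};
// an omitted or empty key means "do not filter on this key".
function matchesFilter(metadata, requested) {
    return Object.entries(requested).every(
        ([key, tag]) => !tag || (metadata[key] || []).includes(tag)
    );
}

// Given a map of file path -> file contents, return the paths to run.
function selectTestFiles(files, requested) {
    return Object.keys(files).filter(
        (path) => matchesFilter(parseMetadata(files[path]), requested)
    );
}

// Example with hypothetical test files held in memory.
const exampleFiles = {
    'accessibility_spec.js': '// Stage: @prod\n// Group: @accessibility\n',
    'unstable_spec.js': '// Stage: @unstable\n// Group: @accessibility\n',
    'messaging_spec.js': '// Stage: @prod\n// Group: @messaging\n',
};
console.log(selectTestFiles(exampleFiles, {stage: '@prod', group: '@accessibility'}));
// prints [ 'accessibility_spec.js' ]
```

A real script would read the test files from disk and pass the selected paths to Cypress. The sketch keeps everything in memory so the selection logic stays easy to follow.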

To be sure, there are lots of best practices out there that are not mentioned here. But I hope our guidelines and best practices help anyone reading this, especially those who want to get started with Cypress or set up an automated UI testing framework in general.

Conclusion

The setbacks and hurdles we experienced are not necessarily limitations of the Cypress test framework; they are manageable through organization and best practices. We are glad to have Cypress as part of our automated UI testing and are very thankful to the Cypress team for creating a tool that helps make writing E2E tests enjoyable.

Finally, I’d like to thank all the contributors who shaped and helped set up our E2E and put us where we are today.

All E2E contributors and their number of contributions (as of this writing): Abdulrahman (Abdu) Assabri (5), Abraham Arias (6), Adzim Zul Fahmi (3), Agniva De Sarker (1), Alejandro García Montoro (5), Allen Lai (3), Andre Vasconcelos (1), Anindita Basu (2), Arjun Lather (3), Asaad Mahmood (2), Ben Schumacher (2), bnoggle (1), Bob Lubecker (32), Brad Angelcyk (1), Brad Coughlin (3), catalintomai (2), cdncat (1), Christopher Poile (1), Clare So (2), Claudio Costa (3), Clément Collin (1), composednitin (1), Cooper Trowbridge (2), Courtney Pattison (2), d28park (2), Daniel Espino García (24), David Janda (1), Devin Binnie (5), Donald Feury (1), Eli Yukelzon (5), Farhan Munshi (7), Guillermo Vayá (5), Harrison Healey (14), Hossein Ahmadian-Yazdi (10), Hyeseong Kim (1), Jesse Hallam (6), Jesús Espino (2), Jonathan Rigsby (2), Jorde G (1), Joseph Baylon (68), Kelvin Tan YB (1), kosgrz (2), lawikip (2), m3phistopheles (1), Marc Argent (3), Maria A Nunez (1), Mario de Frutos Dieguez (3), Martin Kraft (11), Matthew Shirley (1), Md Zubair Ahmed (11), Md_ZubairAhmed (1), metanerd (1), Miguel Alatzar (1), NiroshaV (1), oliverJurgen (2), Patrick Kang (1), Pradeep Murugesan (1), Prapti (4), Rob Stringer (1), Rohitesh Gupta (49), Romain Maneschi (3), Sam Wolfs (1), Sapna Sivakumar (3), Saturnino Abril (160), Scott Bishel (5), Shota Gvinepadze (1), sij507 (7), Soo Hwan Kim (2), sourabkumarkeshri (1), Sudheer (7), syuo7 (1), Takatoshi Iwasa (1), Tomas Hnat (1), Tsilavina Razafinirina (3), Valentijn Nieman (3), VishalSwarnkar (3), Vladimir Lebedev (11), VolatianaYuliana (2), Walmyr (1), 興怡 (1)

Ready to get started with Cypress?

If Cypress sounds interesting to you, please join us for the Cypress Test Automation Hackfest, which runs through August, and win an exclusive Mattermost swag bag!