Start using GPT-4 and local models with the open source OpenOps framework

Organizations — particularly those with high security and compliance requirements — need a customizable way to explore and innovate with artificial intelligence and other new technologies.

This is why we’re happy to introduce OpenOps, an open source platform designed to help organizations experiment with AI in a secure environment they control. (Note: OpenOps is not an actively supported project, but the repository remains for historical purposes. To learn more about Mattermost’s latest AI capabilities, check out Mattermost Copilot.)

OpenOps is a framework of several tools that enables you to test open source AI models in a sandbox. Once deployed, you can experiment with OpenOps to establish the best workflow practices for your team before rolling out AI to more folks.

In other words, OpenOps is both a screening process and a workshop for AI, all in one place.

Under the hood, the OpenOps framework includes:

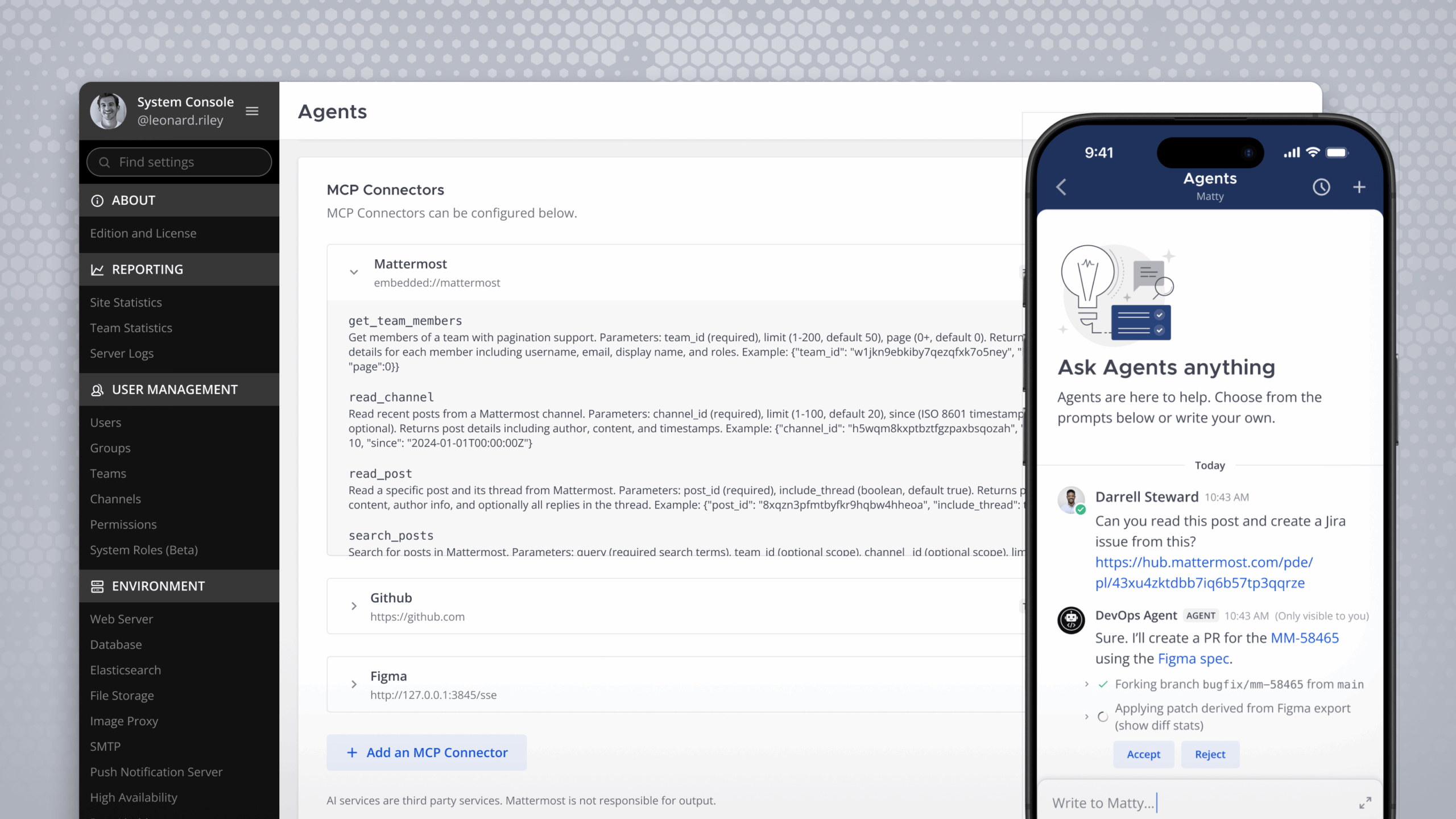

- Mattermost, your hub for messaging, automation, integrations, audio calling, and screensharing

- The Mattermost AI plugin, an extension of Mattermost for generative AI, which is configurable for remote and local models

- Postgres, a database for securing private data from Mattermost

- LocalAI, an open source API service for large language models (LLMs)

Set up the open source AI framework

First, navigate to the OpenOps repository in the Mattermost GitHub organization. For our purposes, we’ll be using the local install instructions from the README.

Inside the repository, there’s an init bash script that starts your entire sandbox. It initializes the Docker Compose setup that contains your database and Mattermost instance and, if you’re using local models, LocalAI. It also installs the latest Mattermost AI plugin to your local deployment and creates your team, channel, and admin user, so you can speed past the onboarding and get straight to development.

To run this script, we need to provide an environment variable for the backend. In this case, we’re configuring it for OpenAI because we’re going to use ChatGPT.

Run the init script in your terminal with that variable set. It’s going to start your Docker Compose and then run through the script, installing everything for you and abstracting the starting process so you can get right in the pilot seat.

Now that the init bash script has finished running, you’ll be presented with the URL that you can use to log into Mattermost. It’s going to drop you into a direct message with the AI bot so that you can communicate with it from the very beginning.

But first, you’ll also need to run the configure OpenAI bash script and provide it with your OpenAI API key. This configures the plugin locally on your machine. You can also do this in the System Console once you log in to your local Mattermost deployment.
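The two setup steps above can be sketched in a few lines of Python. This is only an illustration: the script names (`init.sh`, `configure_openai.sh`) and the `backend` variable name are assumptions based on the description in this post, so check the README in the repository for the exact commands.

```python
import os
import subprocess
from pathlib import Path

# Assumed names, not confirmed by this post: check the OpenOps README.
INIT_SCRIPT = "./init.sh"
CONFIGURE_SCRIPT = "./configure_openai.sh"
BACKEND_VAR = "backend"


def init_command(backend: str = "openai") -> tuple[list[str], dict[str, str]]:
    """Return the init command and the environment it should run with."""
    env = dict(os.environ)
    env[BACKEND_VAR] = backend
    return ["bash", INIT_SCRIPT], env


def configure_command(api_key: str) -> list[str]:
    """Return the command that hands the OpenAI API key to the plugin."""
    if not api_key:
        raise ValueError("an OpenAI API key is required")
    return ["bash", CONFIGURE_SCRIPT, api_key]


def run_setup(repo_dir: Path, api_key: str) -> None:
    """Run both steps in order from inside the cloned repository."""
    cmd, env = init_command("openai")
    subprocess.run(cmd, cwd=repo_dir, env=env, check=True)
    subprocess.run(configure_command(api_key), cwd=repo_dir, check=True)
```

Calling `run_setup(Path("openops"), os.environ["OPENAI_API_KEY"])` would run both scripts in sequence. Reading the key from an environment variable (the name here is also just an example) keeps it out of your shell history and source files.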

OpenOps use cases

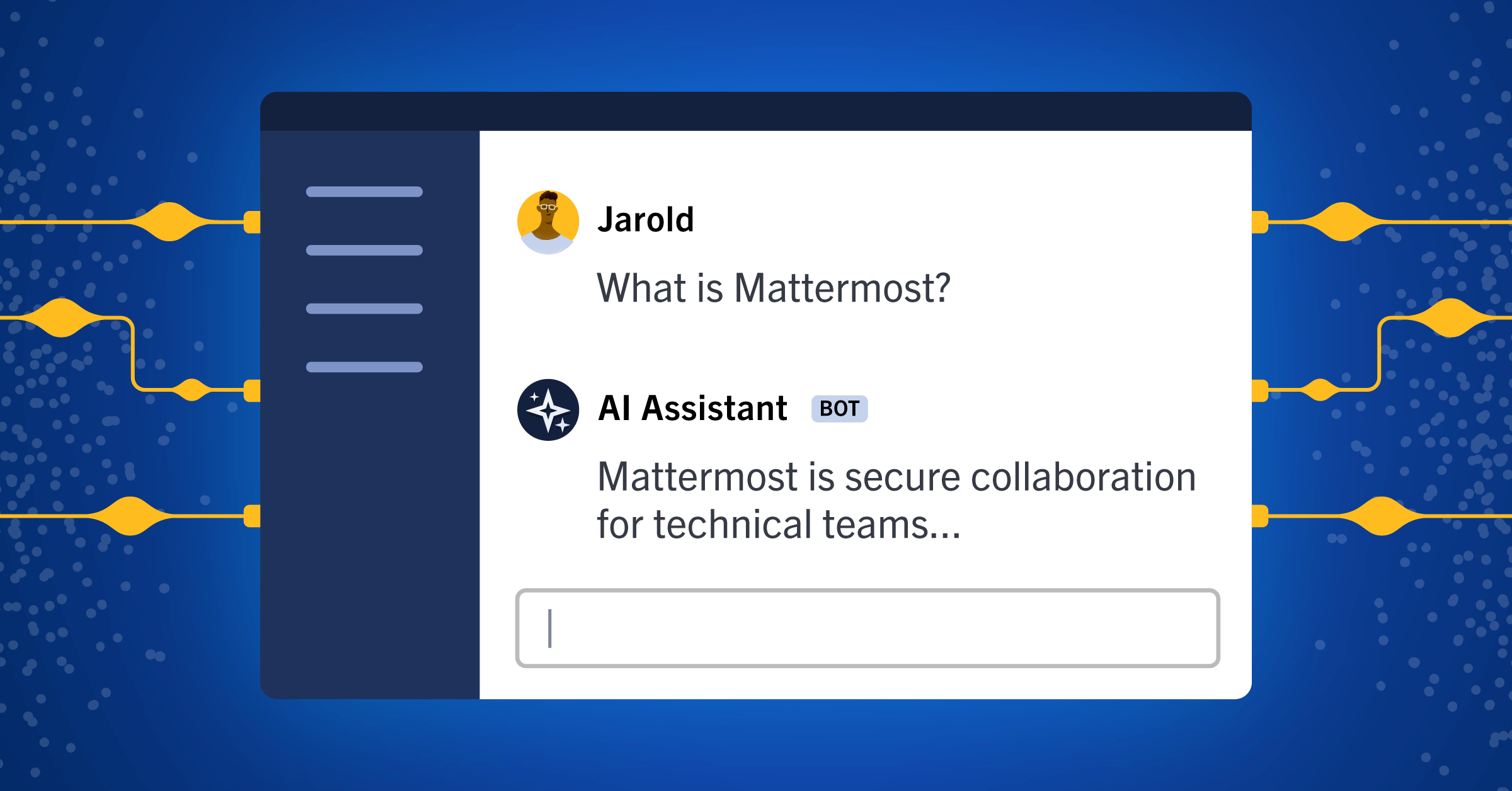

Next, copy your password and navigate to the URL provided. Log in as your root user with the password. As mentioned, you’ll end up in a direct message with your AI assistant, which you can begin talking to directly.

The AI is ready to respond to your commands and you can now explore the functionality provided by the Mattermost AI plugin. You can also begin thinking about new ways to integrate and use the AI with the conversations that are happening with the team. You’re now in a self-hosted, multi-user, open source operational hub with tooling for chat, automation, and now AI with a database where all of your conversations are secured under your control.

Pretty cool, isn’t it? And this is just the starting point.

Now that you have a sample environment working, it’s time to explore some possible use cases. For this tutorial, we have set up an example server with OpenOps to demonstrate some of its functionality. It has messages that are coming in from webhooks and other sources like Zapier and GitHub. It also has channels where conversations are happening between real people talking about the work that they’re doing.

This is a great environment to demonstrate some of the features that come with the Mattermost AI plugin included with OpenOps.

Streaming conversations

The first use case we’ll explore is streaming conversations back from the bot. You might have noticed that when you ask the bot a question, it streams the response back to you. It doesn’t give you one message at the end; it streams the content live from the model, similar to what you might have experienced with other AI tools.

For example, we can ask the bot, “What is Mattermost?” Rather than a single finished message, you’ll see the reply appear piece by piece as the model generates it, so you can watch it thinking and replying in real time.
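To make the idea of streaming concrete, here is a minimal sketch in Python. It does not call a real model or the Mattermost AI plugin; `fake_model_stream` is a hypothetical stand-in that yields a reply in small chunks, and `stream_into_post` shows how a message could grow as each chunk arrives.

```python
from typing import Iterable, Iterator


def fake_model_stream(text: str, chunk_size: int = 8) -> Iterator[str]:
    """Stand-in for a model that yields its reply a few characters at a time."""
    for start in range(0, len(text), chunk_size):
        yield text[start:start + chunk_size]


def stream_into_post(chunks: Iterable[str]) -> Iterator[str]:
    """Yield the growing message after each chunk, as an edited post would show it."""
    message = ""
    for chunk in chunks:
        message += chunk
        yield message


reply = "Mattermost is an open source collaboration platform."
# Each snapshot is what the reader would see at that moment.
snapshots = list(stream_into_post(fake_model_stream(reply)))
```

The last snapshot is the complete reply; every earlier one is a partial message, which is what you see while the bot is still responding.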

Thread summarization

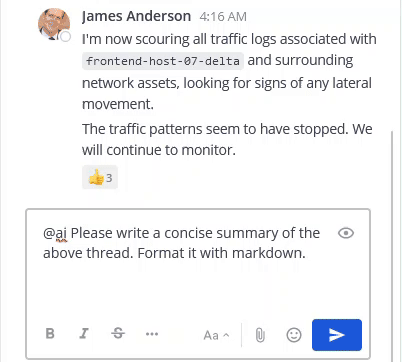

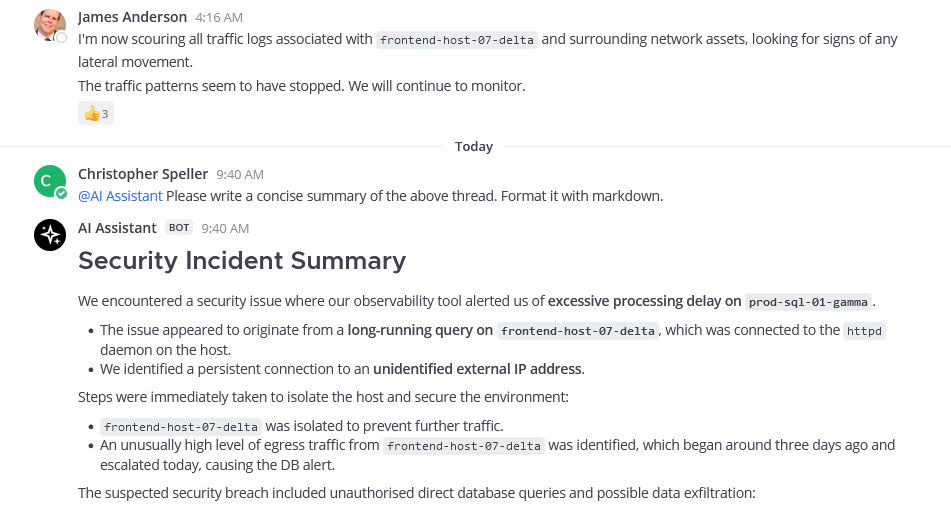

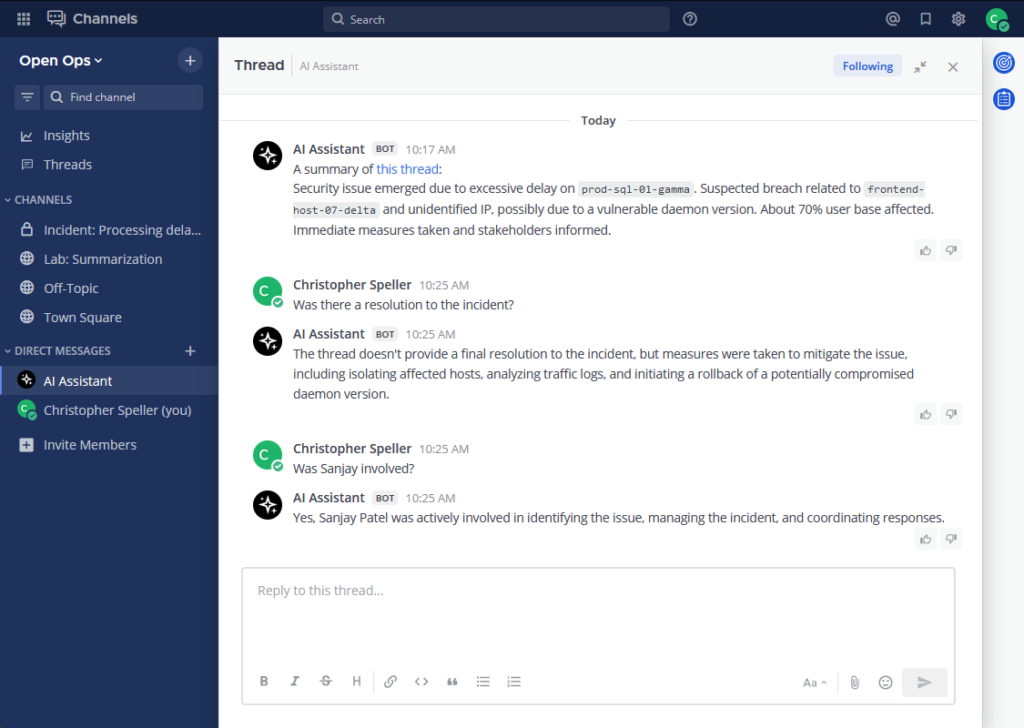

Any thread on Mattermost can also be summarized by the AI. When it does, the AI takes into account who you are and uses that context to surface the information that’s most helpful to you.

For example, you can take a thread about making weekly public meetings using Calls and providing a transcript. Instead of having to read the long conversation end to end, you can ask the AI to summarize the thread for you.

Again, the response that comes back from the AI is a streamed response from the model, which provides an in-depth summary of what happened in the thread.
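One way to picture thread summarization is as prompt building: the posts in the thread and the name of the person asking are combined into a single request for the model. The sketch below is an assumption about how that could look, not the plugin’s actual implementation; the `Post` type, the function name, and the prompt wording are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Post:
    username: str
    message: str


def build_summary_prompt(thread: list[Post], reader: str) -> str:
    """Combine the thread and the requesting user's name into one prompt."""
    transcript = "\n".join(f"{p.username}: {p.message}" for p in thread)
    return (
        f"Summarize the following thread for {reader}. "
        "Focus on decisions and open questions.\n\n"
        f"{transcript}"
    )
```

Passing the reader’s name along is the simplest form of the personalization described above; a fuller version might add their role or team.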

Use the /summarize command, or simply ask the AI bot directly, to get an AI-generated summary of the thread in a message.

Contextual interrogation

Once it’s finished summarizing, you can ask follow-up questions or get further information from the AI. Should you do so, you’ll end up in a threaded conversation with the AI and can ask questions that are personalized for you.

For example, you might ask, “Why would we use regex?” If you didn’t understand why a regular expression is useful in this case, the AI would provide context.

But this is just one example of the AI leveraging who you are and the context you have to give you a personalized response; this functionality could be further expanded because you’re interacting with Mattermost as a user.
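A follow-up question only makes sense if the model can see what came before it. Here is a rough sketch, assuming a chat-style message list with `role` and `content` keys (the shape used by common chat completion APIs); the function name and system prompt are hypothetical and not taken from the plugin.

```python
def follow_up_messages(summary: str, question: str, asker: str) -> list[dict[str, str]]:
    """Build a chat history: the earlier summary as context, then the new question."""
    return [
        {"role": "system", "content": f"You are helping {asker}. Answer using the thread summary."},
        {"role": "assistant", "content": summary},
        {"role": "user", "content": question},
    ]
```

Each additional question in the thread would be appended to this list, so the model always answers with the earlier summary and replies in view.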

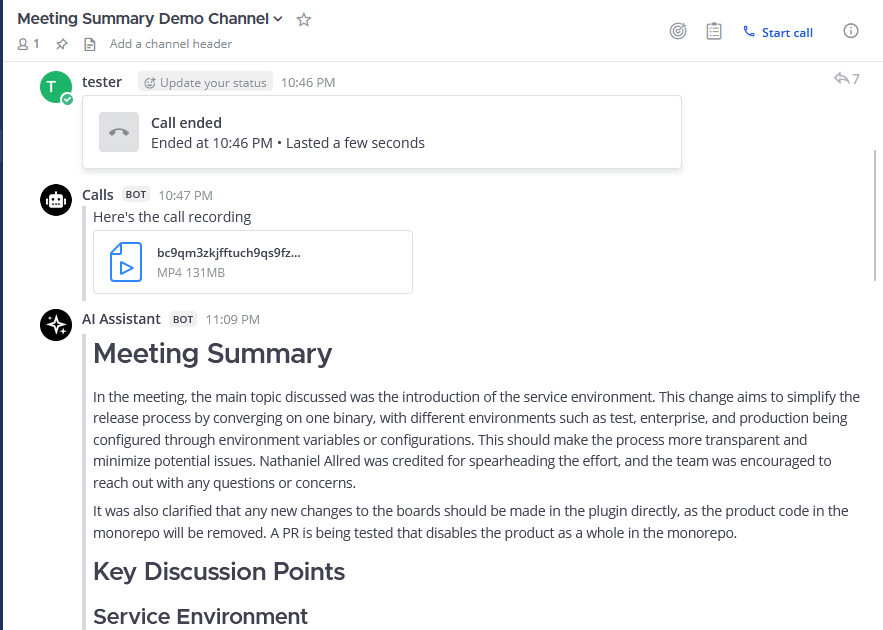

Meeting summarization

The AI could also receive, for example, the audio transcript from a Mattermost call and provide a summary to the channel where that call took place.

For example, the Mattermost weekly developer meeting, which is open and available to the public, is summarized automatically by AI, providing key points from the discussion and any follow-ups that may be relevant. That way, folks who didn’t attend the meeting or want to get additional context can read a summary to get an understanding of what was discussed without having to watch a recording or crawl through a transcript.

Reinforcement learning from human feedback

You may have noticed that the responses from the AI have a thumbs up and thumbs down in the bottom corner. Clicking these buttons enables you to provide feedback to the AI. So, in future iterations, the AI could take your feedback about whether a reply was helpful and use it to contextualize and improve itself moving forward.
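The sketch below covers only the first step of such a loop: collecting the thumbs up and thumbs down clicks and seeing which replies people found helpful. It is a hypothetical illustration; the `Feedback` record and `helpful_share` function are not part of the plugin, and no model training happens here.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Feedback:
    post_id: str   # the AI reply being rated
    user_id: str   # who clicked the button
    helpful: bool  # True for thumbs up, False for thumbs down


def helpful_share(records: list[Feedback]) -> dict[str, float]:
    """Return the fraction of thumbs-up votes for each rated reply."""
    votes: dict[str, list[bool]] = defaultdict(list)
    for record in records:
        votes[record.post_id].append(record.helpful)
    return {post_id: sum(v) / len(v) for post_id, v in votes.items()}
```

Replies with a low share are candidates for review, and the stored ratings are the kind of signal a later fine-tuning step could draw on.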

Sentiment analysis

The AI can also understand the sentiment of any piece of text so you can use that to have it react to something for you. For example, if you’re talking about a possible solution, the AI might react with a pondering emoji because it understands what’s happening in conversations.
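As a rough sketch of how a sentiment label could turn into a reaction, the snippet below maps a label to an emoji name and builds a reaction payload. The `user_id`, `post_id`, and `emoji_name` fields follow the reaction object in the Mattermost REST API as we understand it; the sentiment labels and the mapping itself are purely illustrative assumptions.

```python
from typing import Optional

# Illustrative mapping only: labels and emoji choices are assumptions.
SENTIMENT_TO_EMOJI = {
    "positive": "thumbsup",
    "negative": "disappointed",
    "uncertain": "thinking",
}


def reaction_payload(user_id: str, post_id: str, sentiment: str) -> Optional[dict[str, str]]:
    """Build a reaction body for a post, or None if the sentiment is unmapped."""
    emoji = SENTIMENT_TO_EMOJI.get(sentiment)
    if emoji is None:
        return None
    return {"user_id": user_id, "post_id": post_id, "emoji_name": emoji}
```

Returning `None` for unknown labels means the bot simply stays quiet when it isn’t sure how to react.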

The AI also understands what’s happening in channels. If there’s a channel that’s streaming information from all of our GitHub repositories for Mattermost and there’s a lot of noise in there, you can use the AI to summarize what’s happening.

For example, you might prompt the AI to summarize the last 20 messages. It will then stream a response that summarizes what’s happening in all of those individual messages.
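Picking out “the last 20 messages” can be sketched as follows. This assumes the post list shape returned by the Mattermost REST API for a channel’s posts, where `order` lists post IDs newest first and `posts` maps each ID to its post; the function name is hypothetical, and the sample data in the usage note is made up.

```python
def last_messages(post_list: dict, count: int = 20) -> list[str]:
    """Return the most recent `count` messages, oldest first, ready for a prompt."""
    newest_first = post_list["order"][:count]
    return [post_list["posts"][post_id]["message"] for post_id in reversed(newest_first)]
```

For instance, with `order` set to `["c", "b", "a"]` and `count=2`, the function returns the messages for `b` and then `c`, so the model reads them in the order they were posted.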

Get started with AI with Mattermost today!

Ultimately, these use cases are just the starting point.

To explore ways to integrate powerful new open source AI solutions within your organization, learn more about Mattermost Copilot.

To read more about the history of the old OpenOps project, check out the repository.