Mattermost’s QA journey with Rainforest and what we’ve learned so far

Here at Mattermost, our developers and quality assurance analysts are proud of what we build and work hard to ship a quality product on the 16th of each month. Maintaining that high bar month over month, however, isn’t without its challenges!

The quality assurance team had to ensure that every aspect of the product was tested and working as expected before each new release shipped, all while the code base and product offering kept growing. With developers far outnumbering quality assurance analysts, this was proving to be an insurmountable task.

In this post, I’ll share some of the testing challenges we’ve faced as Mattermost has grown, and how we’ve used Rainforest to help solve those challenges.

Scaling visual testing tasks over time

Like any messaging platform, Mattermost has many features that need visual confirmation that UI elements are properly displayed and work as intended.

Automating regression tests that don’t involve UI verification covers part of the problem, but visual verification is a mammoth task. As our company and code base grew, it proved unsustainable for our team.

As a good user experience is of utmost importance to Mattermost, we wanted to ensure that our product was being tested by real people who could give valuable feedback. After much research, we chose to leverage the experience and expertise of Rainforest.

How Mattermost uses Rainforest

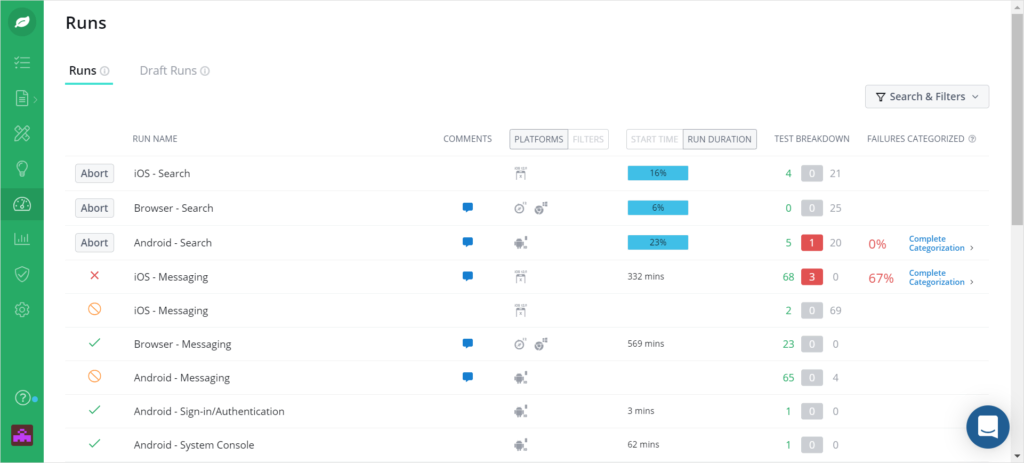

Rainforest’s crowdsourced software quality assurance testers are situated around the globe across time zones and are therefore available at all times. Writing tests in the Rainforest app is simple—you can write them yourself, or you can send test writing requests to the Rainforest test writers.

Over time, we have built up a suite of tests in Rainforest, at present totaling just over 440 tests. For each release, we add new tests to our Rainforest test suite. But we still have a long way to go!

When we started on our journey with Rainforest, our full suite of tests was contained in one gigantic Google spreadsheet. These tests encompass:

- Mobile (Android and iOS)

- Web app (Firefox, Chrome, Safari, Edge, and various combinations of these in mobile browser view)

- Desktop app (Windows and macOS)

Now that we have transitioned from the above spreadsheet to Test Management for Jira (TM4J), we look back and wonder how we ever navigated around that 30-odd-tab sheet! It is now far easier to transfer tests directly from TM4J into Rainforest.

Rainforest’s Jira integration is also helpful: it lets us report bugs directly from a failed test. This saves time by automatically inserting the test steps and area of failure into the Jira ticket.

Our QA process

Rainforest is not the only tool we use to test our software.

Our Software Development Engineers in Test (SDETs) write and maintain a suite of tests in Cypress that run against master daily and against the release branch during the release testing cycle. While Cypress is great for running automated tests against the web app, it can’t run tests against our mobile apps. As a result, we have been concentrating on getting all mobile tests into Rainforest.

Of course, there are also unit tests for every pull request that goes into the server, web app and redux repositories. These unit tests help to catch failures and bugs before the pull request is merged and also once the pull request has made its way into master and the release branch.

Tips for using Rainforest effectively

As anyone in the software industry will know, a code base is constantly growing and changing, which means that test coverage needs to do the same. We have a long road ahead, as many tests still need to be written in Rainforest as well as in Cypress.

However, we’re confident that Rainforest’s newly launched “no code automated testing” feature will help to rapidly scale coverage.

Here are a few things we’ve learned so far while using Rainforest:

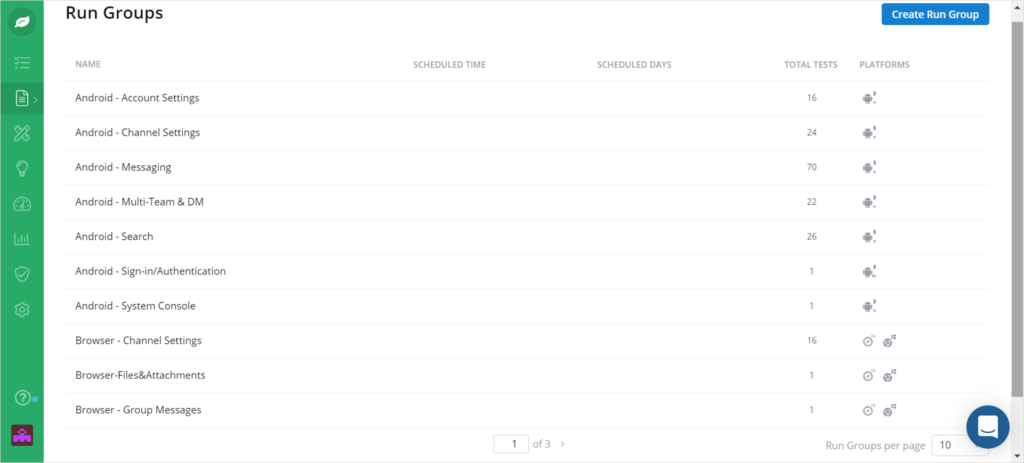

- Start with structure. Before setting up Features, Run Groups, and Tests in Rainforest, ensure you have thought out the structure as it is not so easy to go back and rearrange things afterwards.

- Consider your platforms. Think about the various platforms you’ll be testing on (e.g., mobile, browser, and desktop app), as there will need to be a separate test for each (and two for mobile: Android and iOS).

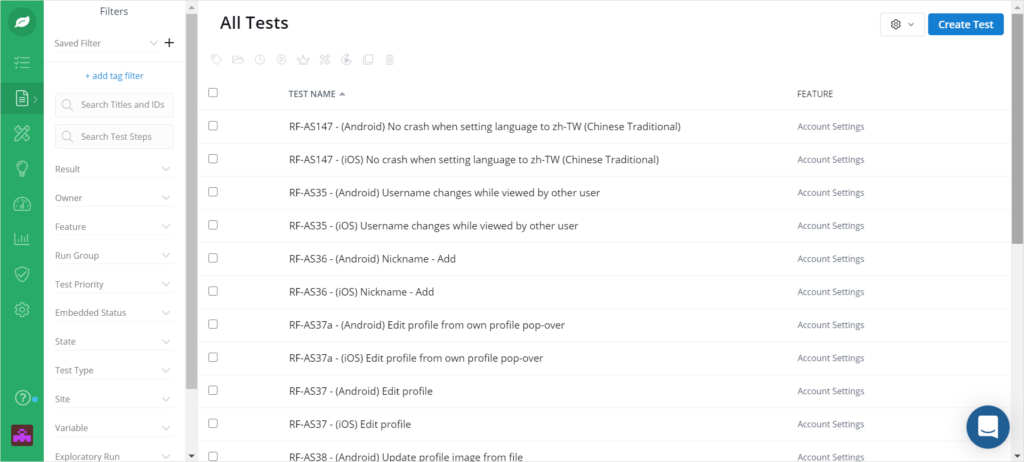

- Create an organizational system. Work out a test numbering system so it is easy to see, at a glance, which platform the test is for, and remember that more than one test can have the same number (see the example below).

  - This was important for us because we were transferring tests from a spreadsheet into Rainforest and needed a way to note on the spreadsheet that the test was done.

  - Here’s an example:

    - RF-AS23 – (Android) Can reply to a post from the long-press menu

      - The letters/numbers at the start of the test name indicate “Rainforest”-Account_Settings_test-number-23 (platform is Android)

    - RF-AS23 – (iOS) Can reply to a post from the long-press menu

      - The letters/numbers at the start of the test name indicate “Rainforest”-Account_Settings_test-number-23 (platform is iOS)

- Write tests. Plan well before embarking on writing tests for crowdsourced testers. Remember that they do not know your software, so test steps need to be very granular and explicit with clearly laid out “expected” results.

- Diligently review all test failures! It’s a great opportunity to keep your test suite up to date.
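To make the numbering convention from the list above concrete, here is a minimal Python sketch that splits a test name such as "RF-AS23 – (Android) ..." into its parts and rebuilds it. This is only an illustration, not something Rainforest or TM4J provides: the `ParsedName` type, the two helper functions, and the `AREA_CODES` table are hypothetical names, and "AS" is the only area code taken from the example in this post.

```python
import re
from typing import NamedTuple

# Hypothetical lookup table; "AS" (Account Settings) is the only code
# that appears in the example above.
AREA_CODES = {"AS": "Account Settings"}


class ParsedName(NamedTuple):
    area_code: str   # e.g. "AS"
    number: int      # e.g. 23
    platform: str    # e.g. "Android" or "iOS"
    title: str       # human-readable description of the test


# "RF-" prefix, letters for the area, digits for the test number, then an
# en dash (or plain hyphen), the platform in parentheses, and the title.
NAME_PATTERN = re.compile(r"^RF-([A-Z]+)(\d+)\s*[–-]\s*\(([^)]+)\)\s*(.+)$")


def parse_test_name(name: str) -> ParsedName:
    """Split a test name that follows the RF-<area><number> convention."""
    match = NAME_PATTERN.match(name.strip())
    if match is None:
        raise ValueError(f"not a recognised test name: {name!r}")
    area_code, number, platform, title = match.groups()
    return ParsedName(area_code, int(number), platform, title.strip())


def format_test_name(test: ParsedName) -> str:
    """Rebuild the display name from its parts."""
    return f"RF-{test.area_code}{test.number} – ({test.platform}) {test.title}"


android = parse_test_name(
    "RF-AS23 – (Android) Can reply to a post from the long-press menu"
)
ios = parse_test_name(
    "RF-AS23 – (iOS) Can reply to a post from the long-press menu"
)

# Both platform variants share one ID, so a single spreadsheet row
# can be ticked off once both tests exist.
same_id = (android.area_code, android.number) == (ios.area_code, ios.number)
```

Keeping the platform out of the ID and in the parenthesised label is what lets two tests share a number while still being distinguishable at a glance.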

The Rainforest team are a bunch of super-fun and helpful folk who are always happy to help with any problem you might have! From the CEO to the customer success and customer support teams, everyone pays great attention to what you have to say and nothing is ever too much trouble. Issues are sorted at lightning speed and always with a smile!

Check out what they’re working on to learn more about testing with Rainforest.