Mattermost’s cloud optimization journey: Pillars of success, future strategies & lessons learned

Mattermost has embarked on a transformative journey in cloud optimization. This journey is marked by strategic initiatives, innovative approaches, and valuable lessons, all aimed at enhancing efficiency and reducing costs.

This blog post explores the successful strategies that have guided our cloud optimization efforts. It also highlights our future direction with an emphasis on ARM/Graviton workloads and shares insights from our experiences, particularly regarding spot instances.

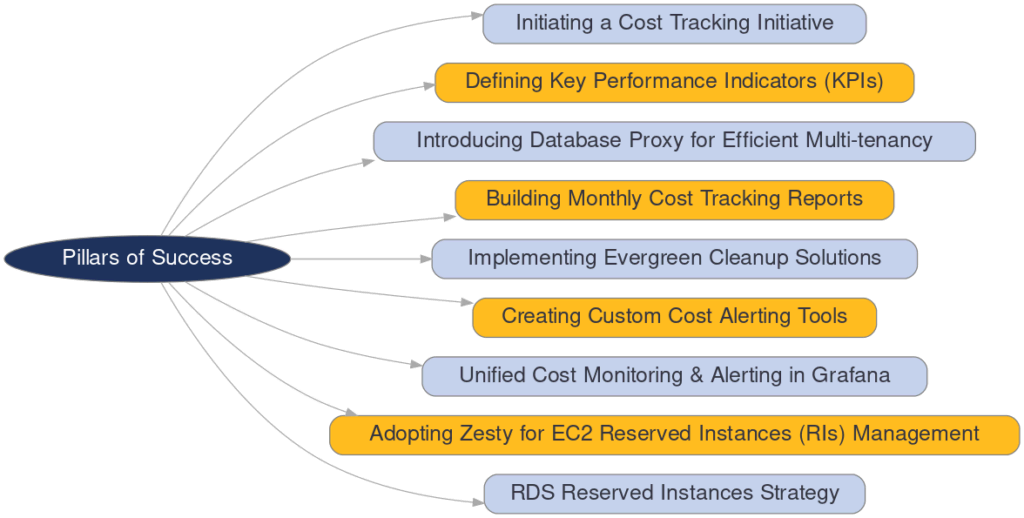

Cloud optimization: Pillars of success

- Initiating a cost-tracking initiative. The journey began with a critical move to systematically track cloud expenses, establishing a foundation for visibility into our cloud spend.

- Defining key performance indicators (KPIs). We introduced measurable KPIs, such as the Average Production Workspace Cost metric, to align optimization efforts with our objectives and monitor spending trends effectively, especially during scaling phases.

- Introducing database proxy for efficient multi-tenancy. By deploying a database proxy, we optimized resource utilization, enabling efficient multi-customer hosting on multitenant databases and reducing costs.

- Building monthly cost tracking reports. We developed advanced tools to produce detailed monthly reports, shedding light on resource allocation and supporting informed decision-making.

- Implementing evergreen cleanup solutions. We utilized AWS Lambda for the automated cleanup of resources like EBS volumes, ELBs, AMIs, and snapshots, minimizing waste and promoting cost efficiency.

- Creating custom cost alerting tools. Initially, custom tools were pivotal for real-time cost monitoring. However, as our mission expanded beyond the cloud infrastructure for our SaaS offering to encompass company-wide cloud optimization, the need for a more scalable solution became evident.

- Unified cost monitoring and alerting in Grafana. The shift towards Grafana for cost monitoring and alerting was significant. This platform, coupled with AWS StackSets, enabled centralized and transparent cost management across the entire organization, reflecting our commitment to proactive, company-wide cost optimization.

- Adopting Zesty for EC2 Reserved Instances (RIs) management. Zesty’s automated solution for managing EC2 RIs significantly improved our cost efficiency by dynamically adjusting our RI portfolio based on actual usage, offering a more strategic approach compared to our previous reliance on AWS Savings Plans.

- RDS Reserved Instances strategy. We adopted a proactive approach to managing RDS Reserved Instances, which are not covered by Zesty. By evaluating our usage on a yearly cadence, we projected our needs for RDS clusters and reserved the necessary instance families ahead of time. This strategy ensured we maximized cost savings while maintaining flexibility in our database operations.
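To make the evergreen cleanup pillar more concrete, here is a minimal sketch of the selection step such a Lambda function might perform for EBS volumes. It is not our production code: the function name `stale_unattached_volumes` and the 14-day threshold are hypothetical, and the input is assumed to be shaped like the `Volumes` entries returned by boto3's `describe_volumes` call. The AWS calls themselves are left out so the logic can run without cloud access.

```python
from datetime import datetime, timedelta, timezone


def stale_unattached_volumes(volumes, now, min_age_days=14):
    """Return IDs of volumes that are unattached and older than min_age_days.

    `volumes` is a list of dicts with VolumeId, State, and CreateTime keys,
    as found in a describe_volumes response. The 14-day default is an
    illustrative assumption, not an actual retention policy.
    """
    cutoff = now - timedelta(days=min_age_days)
    return [
        v["VolumeId"]
        for v in volumes
        # "available" is the EC2 state of a volume not attached to any instance.
        if v["State"] == "available" and v["CreateTime"] < cutoff
    ]


# Hypothetical sample data standing in for a real API response.
now = datetime(2024, 3, 1, tzinfo=timezone.utc)
sample = [
    {"VolumeId": "vol-old-unattached", "State": "available",
     "CreateTime": now - timedelta(days=30)},
    {"VolumeId": "vol-new-unattached", "State": "available",
     "CreateTime": now - timedelta(days=2)},
    {"VolumeId": "vol-old-attached", "State": "in-use",
     "CreateTime": now - timedelta(days=90)},
]
print(stale_unattached_volumes(sample, now))  # ['vol-old-unattached']
```

Keeping the selection logic separate from the deletion call makes it easy to run in a report-only mode first and to apply the same age-based filter to other resource types such as snapshots or AMIs.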

Future strategies

Our strategy centers on adopting ARM/Graviton workloads, starting with our databases. The transition required minimal effort because Amazon Aurora supports Graviton-based instances, which allowed us to move these workloads without altering existing operations.

Following this initial success, we focused on adapting our internal services workloads within our Command and Control clusters to support ARM64 and gradually introduced ARM nodes.

Furthermore, we migrated our DNS servers to ARM64 instances, underscoring our commitment to adopting cost-effective and efficient technologies. Looking ahead, we are methodically transitioning more workloads to support both AMD64 (x86-64) and ARM64 architectures, optimizing our operations for the future.
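One practical prerequisite for running a workload on mixed-architecture nodes is that its container image is published for every target architecture. As a rough sketch of such a pre-flight check (an assumption for illustration, not a description of our tooling), the hypothetical helper `missing_architectures` below inspects a parsed OCI image index, whose `manifests` entries each carry a `platform` object with `architecture` and `os` fields, and reports which required architectures are absent.

```python
def missing_architectures(image_index, required=("amd64", "arm64")):
    """Return the required CPU architectures that an image index lacks.

    `image_index` is a parsed OCI image index (or Docker manifest list).
    Only Linux platform entries are considered; entries without a
    platform, or with a non-Linux OS, are ignored.
    """
    available = {
        m["platform"]["architecture"]
        for m in image_index.get("manifests", [])
        if m.get("platform", {}).get("os") == "linux"
    }
    return sorted(set(required) - available)


# Hypothetical index for an image that has only been built for amd64.
amd64_only = {
    "manifests": [
        {"platform": {"architecture": "amd64", "os": "linux"}},
        {"platform": {"architecture": "unknown", "os": "unknown"}},
    ]
}
print(missing_architectures(amd64_only))  # ['arm64']
```

An empty result means the image can be scheduled on either node type; a non-empty result flags a workload that still needs a multi-architecture build before ARM nodes are introduced for it.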

Lessons learned: Spot instances

Our experimentation with spot instances for non-critical workloads highlighted the balance between cost savings and operational stability. The unpredictability and management complexity ultimately led us to conclude that the trade-offs didn’t align with our operational objectives, guiding us toward more stable and predictable solutions.

Cloud optimization: Final thoughts

Mattermost’s journey in cloud optimization is a testament to our relentless pursuit of excellence, showcasing our strategic initiatives, future-ready technologies, and the lessons that guide our decisions.

As we continue to evolve and adapt to the latest in cloud technology, we remain committed to sharing our journey, offering insights, and setting new standards in efficient cloud operations.

To learn more about our cloud optimization journey, read Trimming Operational Costs: Unveiling Mattermost’s Cross-Cluster Migration.