Performance at Scale with Mattermost

In the world of workplace messaging, performance matters most.

Unless your messaging platform can scale to support your entire organization, you will quickly end up with multiple siloed systems, creating artificial barriers between teams.

At Mattermost, we set out to build a platform that supports the world’s largest enterprises. To do that, Mattermost needs to be able to accommodate tens of thousands of simultaneous users, all of whom are using the platform in various ways at the same time.

Real-time messaging platforms have unique scalability requirements compared to systems like email. When someone posts a message to their colleagues, not only does that message need to be sent, it also needs to be rebroadcast to everyone else on the channel simultaneously. Additionally, the platform needs to be able to send out push notifications and email notifications to offline users.
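To make that fan-out concrete, here is a minimal Python sketch of how the recipients of one posted message might be split between real-time delivery and notifications. It is an illustration only, not Mattermost's implementation; the `fan_out` function and the sample user names are hypothetical.

```python
def fan_out(channel_members, online_users, sender):
    """Split a message's recipients into real-time and notification targets.

    Hypothetical helper for illustration; not part of Mattermost's code.
    """
    # Everyone else on the channel needs to receive the message.
    recipients = [m for m in channel_members if m != sender]
    # Connected users get the message rebroadcast in real time.
    realtime = [m for m in recipients if m in online_users]
    # Offline users get a push or email notification instead.
    notify = [m for m in recipients if m not in online_users]
    return realtime, notify


members = ["ana", "ben", "chen", "dee"]
online = {"ana", "ben", "dee"}
realtime, notify = fan_out(members, online, sender="ana")
print(realtime)  # ['ben', 'dee']
print(notify)    # ['chen']
```

A single post therefore produces one delivery per other channel member, which is why broadcast volume grows much faster than post volume.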

To accommodate these requirements, most popular messaging platforms scale horizontally by distributing teams across multiple instances. Employees can communicate with their immediate teammates in this configuration, but it creates a siloed experience: people on different instances cannot use the platform to collaborate with each other directly.

Mattermost is different. Our platform is designed to eliminate siloed communications infrastructure and enable every employee to rely exclusively on Mattermost for all of their collaboration needs. Our goal is to support everyone at an organization—creating the experience of one big team instead of several smaller ones.

This allows organizations to achieve peak productivity.

As larger and larger customers deploy Mattermost, we keep working to improve its performance so it can support more users and more messages per minute. We regularly conduct load tests to simulate how Mattermost would perform for large organizations.

To date, our largest simulation included 70,000 concurrent users. Mattermost handled the volume.

Performance: A brief history

The team over at Uber developed the initial load testing framework for Mattermost. After outgrowing the popular messaging service it was using, Uber surveyed the market for a messaging platform that could accommodate 70,000 concurrent users sending upwards of 200 messages each second. Ultimately, Uber’s engineering team decided to create their own internal messaging app, uChat, on top of Mattermost because, after extensive testing, they knew it could handle that much traffic and then some.

Since then, we’ve kept working to improve performance even further.

Recently, a large financial institution approached us and asked whether our platform could support 30,000 concurrent users running Mattermost on their desktops on the first day it was deployed. Our load tests proved that we could, and easily.

And you don’t just have to take our word for it, either.

You can run your own load tests to see whether Mattermost can accommodate your organization’s users. The other popular messaging platforms certainly don’t let you do that.

How we got here

Let’s look at some numbers and some of our tech infrastructure to give you an idea of how we’re able to support so many concurrent users.

We started with some base assumptions taken from real-world observations:

The average user generates about 30 messages and reads about 300 messages every day. When someone sends a message to their colleagues, that message is broadcast between 10 and 30 times, depending on the size of the team (for an average of 20 times).

A typical real-world utilization rate is around 30%, meaning that 100,000 registered users will produce a load, on average, of 30,000 concurrent users.
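As a rough sketch, these assumptions can be turned into back-of-the-envelope numbers in Python. The function names are hypothetical, and the calculation assumes that only the concurrently connected users post at the stated average rate, which is a simplification.

```python
def concurrent_users(registered_users, utilization=0.30):
    """Estimate concurrent users from registered users (30% utilization)."""
    return round(registered_users * utilization)


def daily_deliveries(posting_users, posts_per_user=30, fan_out=20):
    """Estimate message deliveries per day: posts times average broadcast count."""
    return posting_users * posts_per_user * fan_out


online = concurrent_users(100_000)
print(online)                    # 30000
print(daily_deliveries(online))  # 18000000
```

Changing `fan_out` to 10 or 30 gives the low and high ends of the broadcast range mentioned above.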

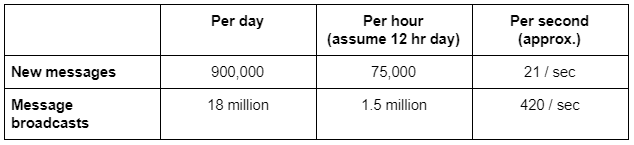

Given that, the load requirements for such an organization would be on the order of:

Supporting that much real-time messaging can be a difficult task.

To accomplish it, we use commodity hardware: specifically, AWS m4.xlarge instances as our standard test boxes. Our standard topology is as follows:

- Load-balancing layer: NGINX servers

- Mattermost cluster: Two to six boxes that enable us to support 20,000 to 60,000 concurrent users; some customers have run 10,000 users on a single box (but we wouldn’t recommend that)

- Database layer: AWS Aurora with one master and two to six read replicas

More details of the topology architecture can be found in the Administrator’s Guide.
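For a quick feel of the sizing implied by that topology, here is a small Python sketch. The figure of 10,000 concurrent users per box is only inferred from the "two to six boxes for 20,000 to 60,000 users" range above; it is not an official sizing rule, and `app_servers_needed` is a hypothetical helper.

```python
import math

# Assumption: ratio implied by 2-6 boxes supporting 20,000-60,000 users.
USERS_PER_BOX = 10_000
# Assumption: never plan for fewer than the two-box minimum in the topology.
MIN_BOXES = 2


def app_servers_needed(concurrent_users):
    """Rough count of Mattermost cluster boxes for a concurrent-user load."""
    return max(MIN_BOXES, math.ceil(concurrent_users / USERS_PER_BOX))


print(app_servers_needed(30_000))  # 3
print(app_servers_needed(60_000))  # 6
print(app_servers_needed(5_000))   # 2
```

Loads beyond 60,000 concurrent users fall outside the range described here, so treat any extrapolation as a starting point for your own load tests.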

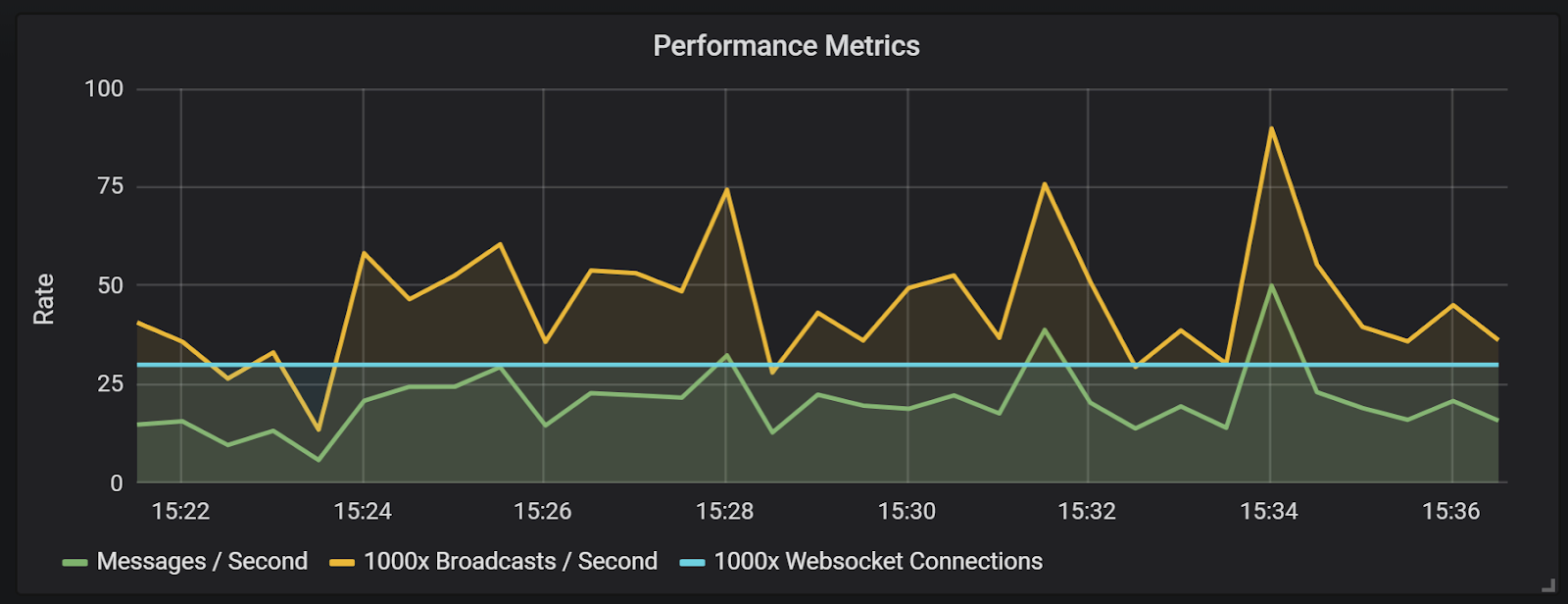

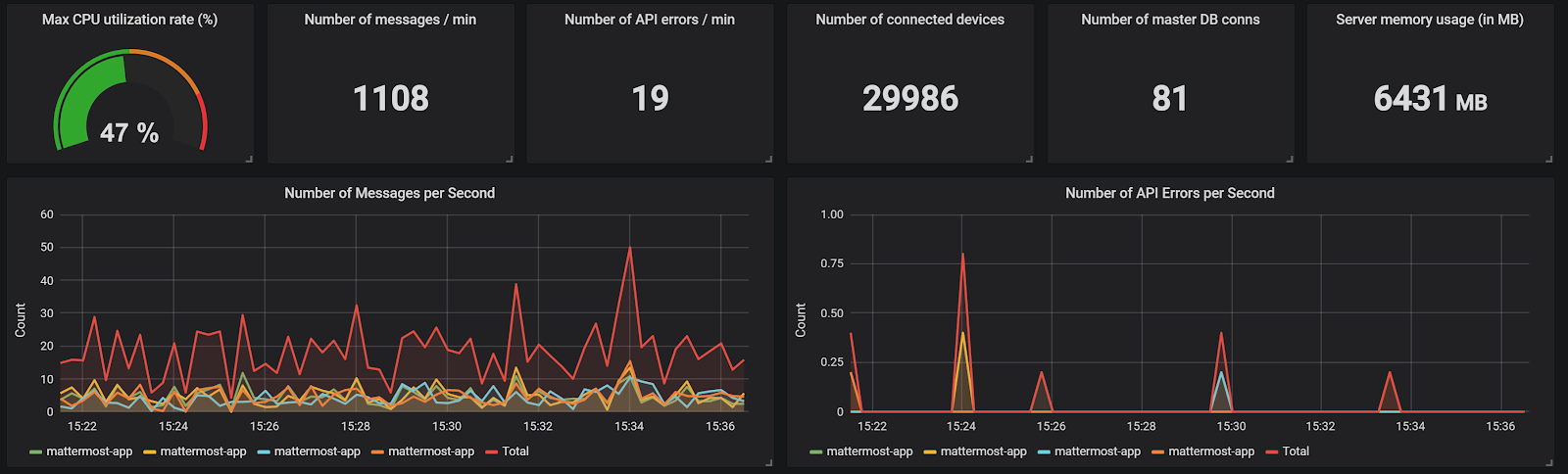

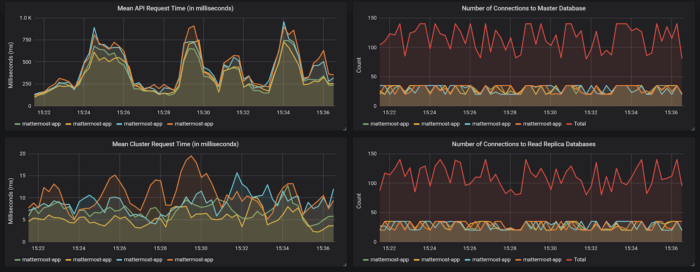

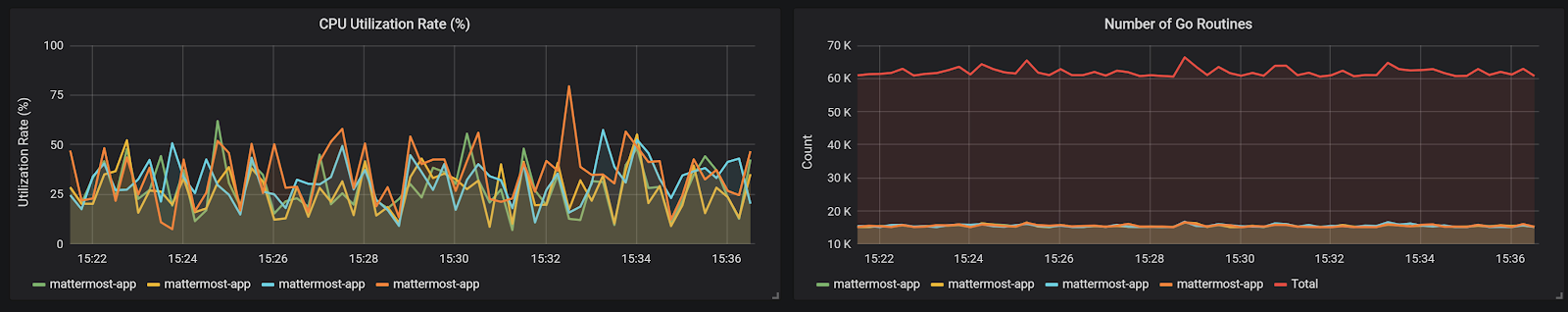

Using this topology, here are the results from a recent extreme load test.

We simulated 30,000 concurrently connected users, with each user posting 30 messages over a 12-hour day. This produces a total of 75,000 messages posted per hour (21 messages per second) on average.

Each message was broadcast to 2,000 users on average (100 times the average fan-out of 20 assumed earlier), for a total of 150 million broadcasts sent per hour.
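The load test figures follow directly from those parameters. The short Python check below reproduces the arithmetic; the variable names are ours, chosen for illustration.

```python
users = 30_000                  # concurrently connected users
posts_per_user_per_day = 30     # messages each user posts
hours_per_day = 12              # length of the simulated working day
recipients_per_message = 2_000  # average broadcast fan-out in this test

messages_per_hour = users * posts_per_user_per_day // hours_per_day
messages_per_second = messages_per_hour / 3600
broadcasts_per_hour = messages_per_hour * recipients_per_message

print(messages_per_hour)              # 75000
print(round(messages_per_second, 1))  # 20.8
print(broadcasts_per_hour)            # 150000000
```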

As you can see from the mean request times, API errors and goroutine counts, the system handled this with ease, even under these extreme loads.

But, again, don’t just take our word for it. You can take a look at our GitHub repository to independently run your own load tests to verify all of this—something you can’t do with SaaS products.

High performance with Amazon Aurora

Mattermost is designed to work great out of the box on MySQL, PostgreSQL and Amazon Aurora MySQL.

We were able to quickly set up and run our full load tests using Aurora, which was also used by Uber.

We find that Aurora is a good choice for achieving enterprise scale with low administrative overhead. It provides a distributed, fault-tolerant, self-healing and auto-scaling storage system. You also get low latency read replicas, point-in-time recovery and continuous backup to Amazon S3.

If you are interested in checking it out for yourself, you can easily get started with Mattermost by deploying a Bitnami demonstration server to AWS.

Mattermost is proud to be a member of the AWS partner network.

Looking ahead

Our current goal is to be able to fully support 600,000 users (200,000 concurrent connections) on a single team. That way, even in the largest organizations, an employee on one team will be able to track down the person they’re looking for on another team (e.g., the director of sales for a particular product who they’ve never met)—which isn’t the case on other platforms that silo teams. And if an entire company is watching a war room in Mattermost, for example, there won’t be a risk of the channel going down.

To get there, we’re working on several improvements, including:

- Advanced caching

- Additional database query optimizations

- Improving our load-testing infrastructure to catch more performance issues

Once we deliver those things, our work won’t be done. We’ll always be working to make Mattermost even more scalable and reliable.

And you can help get us there even faster.

If you’re interested in helping improve Mattermost’s performance (we ultimately want to support 600,000 users, or about 200,000 concurrent connections), head over to our community forum, join the conversation and start contributing to the project! Let’s build the messaging platform of the future together.