Lessons learned while scaling Collapsed Reply Threads

When the first supporting server-side infrastructure for Collapsed Reply Threads (CRT) shipped with Mattermost v5.29 (November 2020), it included an ominous release note:

> This setting is enabled by default and may affect server performance.

While performance concerns are possible with any new feature, most features don’t require significant architecture and data model changes. Most features don’t ship incrementally across 20 monthly releases. And most features – to their credit? – fail fast and somewhat obviously in the face of performance issues. CRT is not most features.

You may have seen in our announcement blog post that we’re excited to be releasing Collapsed Reply Threads in general availability with Mattermost v7.0 and later. The following is a journey through the past few months of performance investigation, fixes, and lessons learned.

Check out our other articles in this series on Collapsed Reply Threads, including:

- Feature Announcement (Collapsed Reply Threads, now generally available!)

- Administrators guide to enabling Collapsed Reply Threads

- 5 ways to be more productive using Collapsed Reply Threads

Why was launching Collapsed Reply Threads so complicated, anyway?

Collapsed Reply Threads changes the fundamental structure of messaging in Channels — specifically how messages are organized, displayed, and marked as unread. The server had to keep track of where a user left off reading in a thread, while also tracking new threads in a channel, and supporting users who didn’t enable the feature or who were using an older mobile client. Oh, and do this without degrading performance or breaking backward compatibility. Incremental schema changes and supporting bookkeeping code had to be shipped to customers months in advance of actually enabling the feature in order to prepopulate with enough data to be immediately useful.

In the end, it truly was a company-wide effort to bring Collapsed Reply Threads to general availability, and we’re excited for you to experience it. You can check out this lightning talk to learn more about some of the hidden complexities of the feature — but for now, let’s talk more about some of the challenges we faced along the way, and how we solved them!

1. Performance monitoring in production is critical.

While the first changes supporting CRT shipped in November 2020, eight additional Mattermost releases would ship before the first user-facing functionality was included in Mattermost v5.37. Even then, this new functionality was hidden behind a feature flag and officially only in beta, in part because we wanted to address known performance issues before making the feature generally available.

But the first sign of trouble came in a plea for help from Customer Success Engineering Manager Stu Doherty. In an internal post titled “General Wave of Performance Concerns,” Stu connected with engineers to summarize observations by some of our larger customers. At the time, CRT wasn’t the only feature under scrutiny: experimental support for timezones and permalink previews had both exposed performance concerns, almost obscuring CRT in the ensuing investigation.

And yet among the observations shared was the following graph from a customer’s performance monitoring system:

We encourage all enterprise customers to configure Mattermost for Performance Monitoring, leveraging Grafana and Prometheus along with our custom charts to jumpstart investigation into any performance concerns. To my surprise and joy, this particular customer had gone to the extra effort of also wiring up their database for performance monitoring.

In the graph above, we see a huge and sustained increase in InnoDB row updates – on the order of 1000x over the baseline. Very few database deployments are designed to stay online through such a withering load, and very few performance regressions generate such a strong signal. Once we learned that the chart corresponded with an upgrade to Mattermost v5.37, we knew to narrow our search window to changes involving database writes introduced with that release.

Finding and reproducing this issue without the above performance monitoring data would have been possible, but far more difficult. Performance monitoring in production is critical.

2. Test your feature flags

Given the beta release in Mattermost v5.37, we suspected CRT, but this customer had never enabled the beta functionality. How could CRT have had an impact?

Armed with the knowledge that something had changed in the write semantics of the Mattermost server, it didn’t take long to find the root cause. On servers with CRT disabled, every time users switched channels to read new messages, the server would mark as read both the channel and all threads in that channel. This bookkeeping made it easier to turn CRT on in the future, but turned a single database write into possibly hundreds or even thousands of writes.

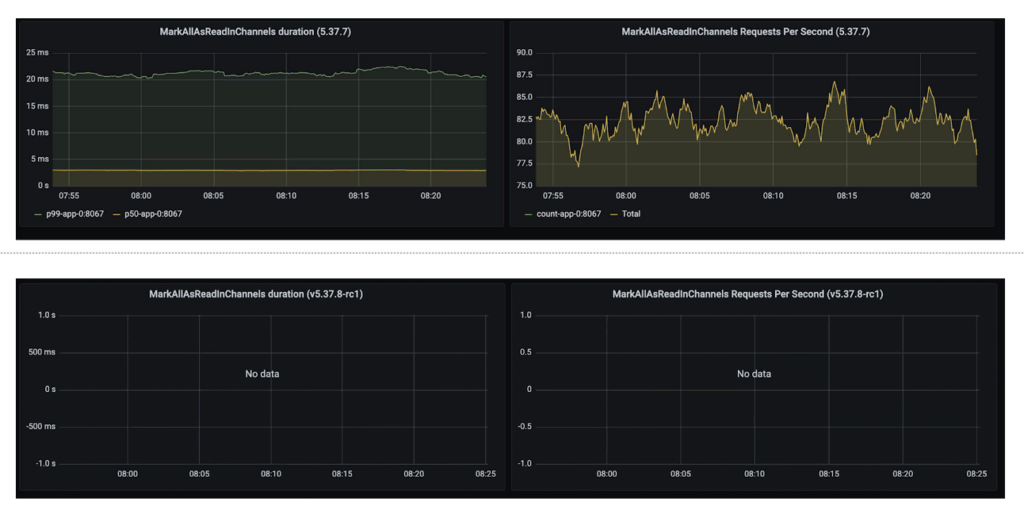

Fortunately, this bookkeeping functionality was controlled by a feature flag. Unfortunately, the code that triggered these additional writes did not check the feature flag. To remedy the immediate performance concerns, we shipped v5.37.8 with a patch to allow customers to fully disable CRT. This time around, we tested the feature flag by load testing the patch and proving that the affected code was no longer being called:

For additional peace of mind, we also disabled this feature flag by default for new Mattermost installations until we could address the performance issue.
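The kind of check that would have caught this can be sketched in a few lines. The following is a minimal illustration, not Mattermost code: `FakeStore`, `view_channel`, and the `thread_bookkeeping_enabled` parameter are hypothetical names. The store counts writes instead of touching a database, so a test can show that the expensive path is skipped when the flag is off.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class FakeStore:
    """Hypothetical in-memory store that counts writes instead of hitting a database."""

    channel_writes: int = 0
    thread_writes: int = 0

    def mark_channel_read(self, user_id: str, channel_id: str) -> None:
        self.channel_writes += 1

    def mark_threads_read(self, user_id: str, thread_ids: Sequence[str]) -> None:
        # One write per thread: this is the expensive bookkeeping path.
        self.thread_writes += len(thread_ids)


def view_channel(store, user_id, channel_id, thread_ids, thread_bookkeeping_enabled):
    """Mark a channel as read; touch its threads only when the flag allows it."""
    store.mark_channel_read(user_id, channel_id)
    if thread_bookkeeping_enabled:  # the guard that was missing in the scenario above
        store.mark_threads_read(user_id, thread_ids)


def count_thread_writes(flag_enabled: bool, thread_count: int) -> int:
    """Simulate one channel view and report how many thread writes it caused."""
    store = FakeStore()
    threads = [f"thread-{i}" for i in range(thread_count)]
    view_channel(store, "user-1", "channel-1", threads, flag_enabled)
    return store.thread_writes
```

Calling `count_thread_writes(False, 500)` and asserting that the result is zero is the sort of test that makes "the flag is off" a verified property rather than an assumption.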

3. Prefer complex reads over unnecessary writes

The previous root cause analysis not only identified the problem but suggested an obvious next question: did we actually need to mark all threads as read? Previously, the code to decide which threads should be updated ran the following query, returning all threads for that user in the given channel:

    SELECT ThreadMemberships.PostId
    FROM ThreadMemberships
    JOIN Threads ON Threads.PostId = ThreadMemberships.PostId
    WHERE Threads.ChannelId IN (:channelIDs)
    AND ThreadMemberships.UserId = :userID;

But in practice, only a small number of threads needed to be marked as read. With a small addition to the query, we can narrow the set to threads with new replies since last being read:

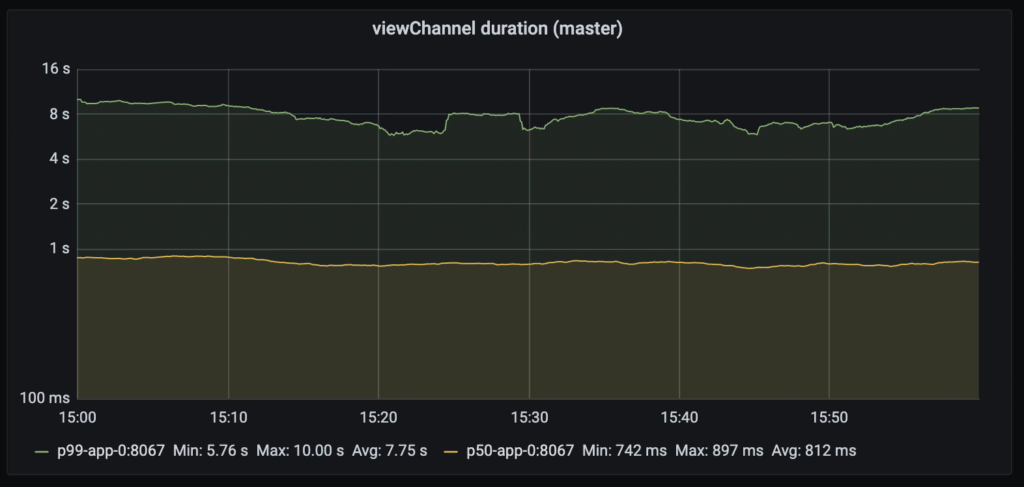

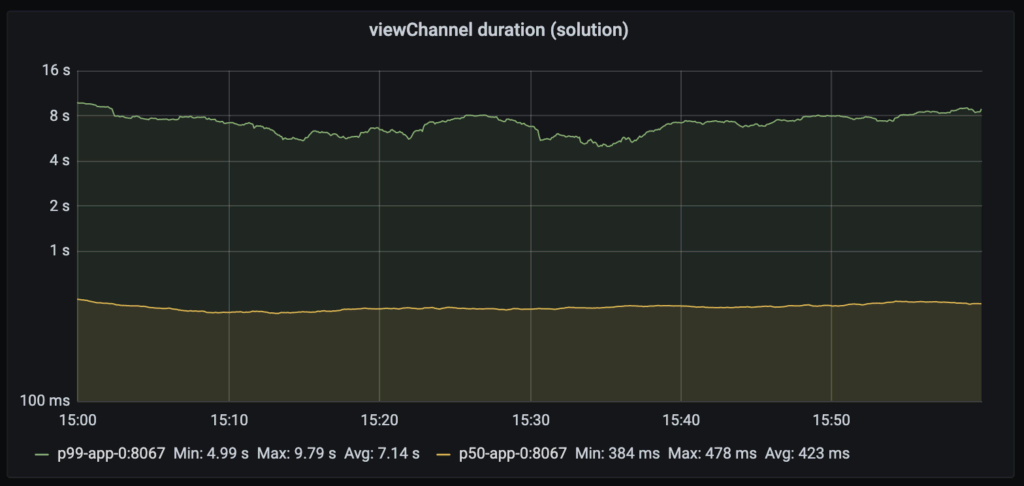

    AND Threads.LastReplyAt > ThreadMemberships.LastViewed

Although this read is slightly more complex, the tradeoff in avoiding unnecessary writes is dramatic. Before the change, load testing recorded on average 800ms to view channels:

After the change, the average time halved:

This improvement gave us the confidence to re-enable the feature flag controlling the bookkeeping functionality by default.
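To make the effect of that extra condition concrete, here is a small sketch that runs both versions of the query against an in-memory SQLite database. The table and column names come from the queries above. Everything else is an assumption for illustration: the sample data, the integer timestamps, the helper names, and the simplification from `IN (:channelIDs)` to a single channel.

```python
import sqlite3

ALL_THREADS = """
SELECT ThreadMemberships.PostId
FROM ThreadMemberships
JOIN Threads ON Threads.PostId = ThreadMemberships.PostId
WHERE Threads.ChannelId = :channelID
AND ThreadMemberships.UserId = :userID
"""

# Same query, narrowed to threads with replies newer than the user's last view.
UNREAD_ONLY = ALL_THREADS + "AND Threads.LastReplyAt > ThreadMemberships.LastViewed"


def build_sample_db(total_threads=1000, unread_threads=3):
    """Create one channel where only a handful of threads have new replies."""
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE Threads (PostId TEXT PRIMARY KEY, ChannelId TEXT, LastReplyAt INTEGER)")
    db.execute("CREATE TABLE ThreadMemberships (PostId TEXT, UserId TEXT, LastViewed INTEGER)")
    for i in range(total_threads):
        post_id = f"post-{i}"
        # The first `unread_threads` threads got a reply after the user last looked (at t=100).
        last_reply_at = 200 if i < unread_threads else 50
        db.execute("INSERT INTO Threads VALUES (?, ?, ?)", (post_id, "channel-1", last_reply_at))
        db.execute("INSERT INTO ThreadMemberships VALUES (?, ?, ?)", (post_id, "user-1", 100))
    return db


def threads_to_mark_read(db, query):
    """Return the PostIds the given query selects for marking as read."""
    params = {"channelID": "channel-1", "userID": "user-1"}
    return [row[0] for row in db.execute(query, params)]
```

With this sample data, the original query selects every thread in the channel for an update, while the narrowed query selects only the few threads that actually have unread replies. Each selected row is a write that follows, so the narrower read is where the savings come from.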

4. Measure, optimize, and repeat

After finding, fixing, and shipping a performance improvement, we knew we had to keep up the momentum and finally address the known issues in the beta release. We had theories as to what was slow, but first, we needed actionable data.

After extending our load testing framework with support for CRT and refactoring database calls to measure execution time more granularly, the major culprits stood out quite clearly:

- One query was guilty of triggering a sequential scan on the very large Posts table.

- Many queries ran slowly due to joining with the Posts table to filter out deleted posts.

- Some queries were entirely redundant!

- Many read-only queries relied exclusively on the master database instead of spreading out load among any configured replicas.
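Measuring execution time per database call, as described above, can be approximated with a small wrapper around each call. This is a generic sketch rather than the instrumentation Mattermost uses. The names `timed_query`, `query_timings`, and `slowest_queries` are hypothetical.

```python
import time
from collections import defaultdict
from contextlib import contextmanager

# Maps a query label to the list of observed durations in seconds.
query_timings = defaultdict(list)


@contextmanager
def timed_query(label):
    """Record how long the wrapped block takes, even if it raises."""
    start = time.perf_counter()
    try:
        yield
    finally:
        query_timings[label].append(time.perf_counter() - start)


def slowest_queries(timings, top=3):
    """Return (label, average_seconds) pairs, slowest first."""
    averages = {label: sum(v) / len(v) for label, v in timings.items() if v}
    return sorted(averages.items(), key=lambda item: item[1], reverse=True)[:top]
```

Wrapping each store call in `with timed_query("some_label"):` and ranking the results after a load test is one way to replace theories about what is slow with a list sorted by measured cost.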

In all, we shipped seven major changes to address performance issues with CRT, with the vast majority of the time spent repeating load tests and quantifying the results.
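One of the culprits listed above, read-only queries that always hit the master, comes down to a routing decision. The sketch below shows the general idea under simple assumptions: connections are stand-in strings, reads rotate round-robin, and `ReplicaRouter` is a hypothetical name, not Mattermost's implementation.

```python
import itertools


class ReplicaRouter:
    """Hypothetical router: writes go to the master, reads rotate across replicas."""

    def __init__(self, master, replicas):
        self.master = master
        # Fall back to the master when no replicas are configured.
        self._read_pool = itertools.cycle(replicas or [master])

    def for_write(self):
        """Return the connection to use for writes."""
        return self.master

    def for_read(self):
        """Return the next connection in the read rotation."""
        return next(self._read_pool)
```

A caveat worth keeping in mind with any scheme like this: replicas can lag behind the master, so a read that must observe a write made moments earlier may still need to go to the master.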

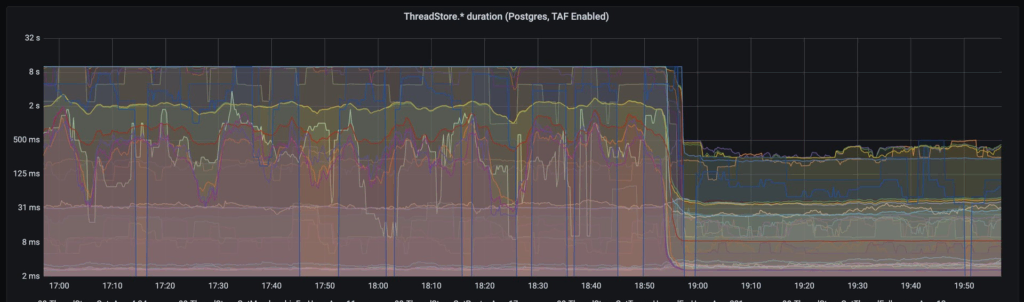

It was a major investment from both a people and infrastructure standpoint, but it was all worth it to see this graph out of one of those load tests:

Note the logarithmic scale! In light of these results, we did not find it necessary to suggest any additional hardware resources when enabling CRT. Check out the Administrators guide to enabling Collapsed Reply Threads to learn more about enabling this feature on your own self-hosted server.

Are you interested in joining our team and helping us drive more performance improvements like these? Check out these open roles for our engineering team.